Prompt Research

See what people actually use LLMs for. Prompt Research reveals real prompt patterns from millions of queries: scientifically reproduced, cross-model consistent, and exclusively licensed through Rankscale.

Trusted by 1000+ active users from brands and agencies worldwide

Why trust Prompt Decoding?

Exclusively licensed through Rankscale, Prompt Decoding is the only method that has been scientifically reproduced, cross-model validated, and recognized by the professional community.

Scientific reproducibility

Results independently validated in the OpenAI/Harvard study (NBER Working Paper 34255). Some usage purposes and clusters in that study matched, in places word for word, the Prompt Decoding findings published months earlier.

Cross-model consistency

Applied to both ChatGPT and Gemini, producing very similar clusters across models. Your strategy is not tied to a single vendor.

Community recognition

Presented at the G50 Summit 2025 by Hanns Kronenberg, and placed 3rd at the SEO World Championship. Validated by the global SEO community.

Methodological basis

Relies on internal model simulations that reveal realistic prompt data from millions of real queries. Reproducible, privacy-compliant, and without personal data.

What data is Prompt Decoding based on?

Prompt Search Volume uses semantic reconstruction: it estimates how often a given intent occurs within the model's learned distribution. Think of it as the LLM equivalent of search volume.

Scientific Reproducibility

Rankscale delivers Prompt Decoding results that were independently validated in the OpenAI/Harvard study “How People Use ChatGPT” (NBER 34255, Sept 2025). Central usage purposes and clusters identified in that study were already visible and published in April 2025 through Prompt Decoding, underscoring the method's quality and validity.

Cross-Model Consistency

Rankscale applies Prompt Decoding across ChatGPT and Gemini. Both models produce very similar clusters with this method and describe the same markets, so you get consistent, comparable insight across the engines that matter.

Methodological Basis

Rankscale uses Prompt Decoding based on internal model simulations in ChatGPT and Gemini. The analysis reveals realistic, representative prompt data from millions of real prompts: typical questions, frames, and answer paths become visible. The method is reproducible, privacy-compliant, and works without personal data.

Why Use Prompt Research?

Rankscale delivers Prompt Research exclusively: scientifically reproduced, cross-model consistent, methodologically sound, and recognized by the professional community. Make LLM usage purposes visible without tracking real users.

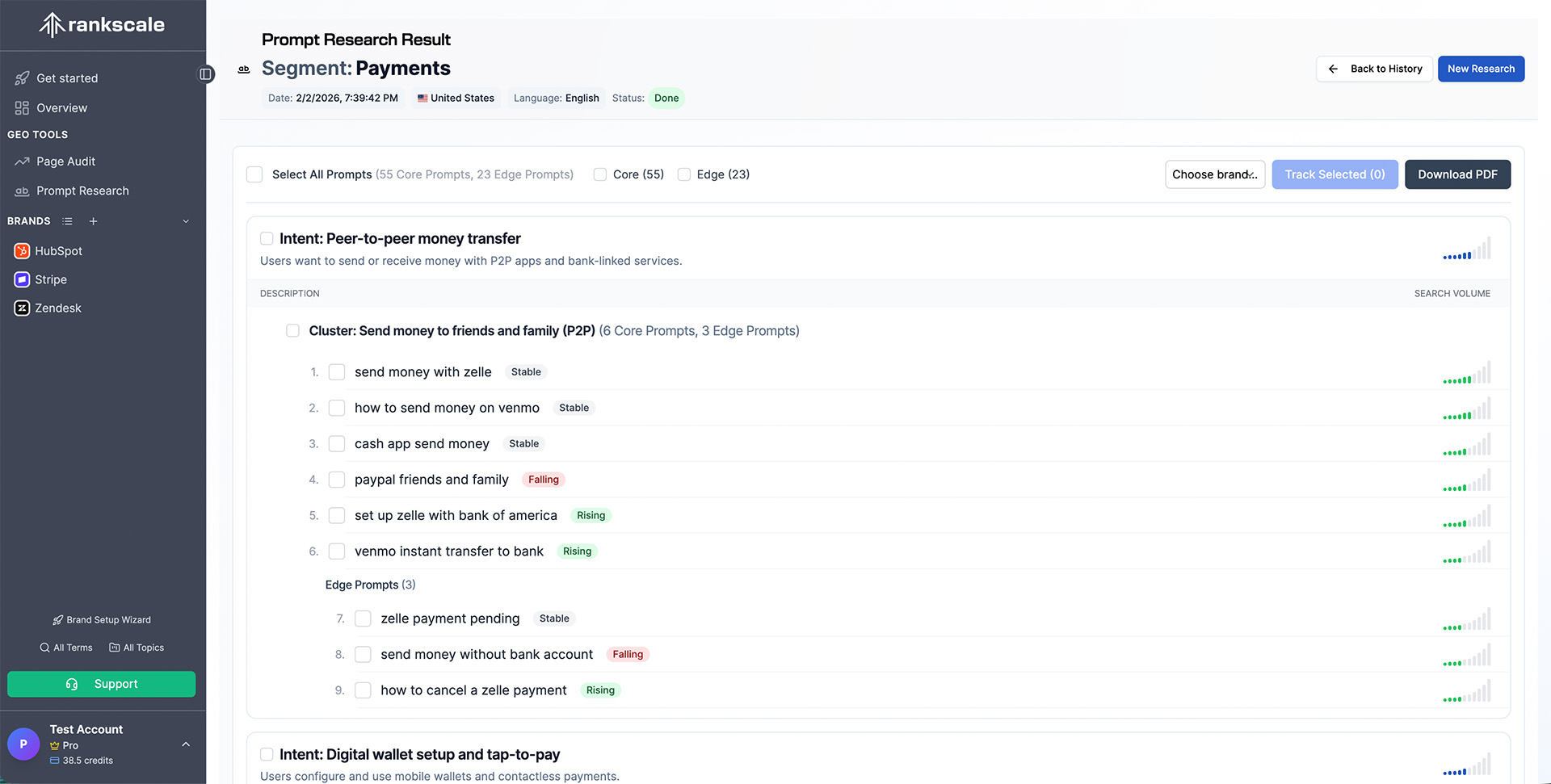

Intents

Returns the strongest intents and clusters for a segment, so you understand what users are really asking AI engines about your market.

Prompts

Core prompts with trends: see which questions drive AI conversations in your space and how they evolve over time.

Entity

Entity Impression Share: measure how often your brand entity appears relative to competitors across AI engine responses.
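
To make the idea of an impression share concrete, here is a minimal sketch. It is not Rankscale's implementation: the function name `entity_impression_share`, the simple substring matching, and the definition used (an entity's appearances divided by all appearances of the tracked entities) are assumptions for illustration only, and the brand names are made up.

```python
from collections import Counter

def entity_impression_share(responses, entities):
    """Return each entity's share of all tracked-entity appearances.

    Assumed definition (hypothetical, not Rankscale's): a response counts
    as one impression for an entity if the entity name occurs in it.
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for entity in entities:
            # Count a response at most once per entity.
            if entity.lower() in lowered:
                counts[entity] += 1
    total = sum(counts.values())
    return {e: (counts[e] / total if total else 0.0) for e in entities}

# Made-up AI engine responses mentioning two fictional brands.
responses = [
    "Acme and Globex both offer this.",
    "Many teams pick Acme.",
    "Globex is a common choice.",
    "Acme is popular.",
]
shares = entity_impression_share(responses, ["Acme", "Globex"])
print(shares)
```

In this toy data Acme appears in three responses and Globex in two, so Acme holds three of the five tracked impressions. A production metric would likely need proper entity recognition rather than substring matching.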

“Rankscale is on the cutting edge of LLM monitoring tools.”

Senior Digital Marketing Manager

Frequently Asked Questions

Rankscale answers common questions about Prompt Research and Prompt Decoding.

Rankscale licenses Prompt Decoding, a method developed by Hanns Kronenberg to make the actual usage purposes of large language models visible. It is based on millions of real prompts and relies on internal model simulations in ChatGPT and Gemini. The method is reproducible, privacy-compliant, and works without personal data.
